PumaMesh moves large payloads fast without giving up control.

The benchmark story is simple: PumaMesh sustained 25.8 Gbps across a long-distance cloud path while keeping security and governance in the data path.

For buyers replacing legacy transfer tools, the proof matters because speed, encryption, policy, and audit need to work together instead of becoming separate projects.

Use the network you already pay for.

Legacy transfer tools often leave long-distance links underused. PumaMesh was built so large files, AI payloads, software artifacts, and regulated data can move quickly while policy and evidence stay attached.

Uses the Link You Pay For

Saturate the WAN, not a single TCP pipe

The transport is designed to use the full capacity of long-haul links instead of stalling at the single-stream TCP ceiling that holds legacy tools back.

Open Standards Base

No proprietary wire protocol to trust

No closed protocol, no black box. Security and network teams can reason about the transport directly.

Governance In the Path

Speed without weaker controls

Classification, attribute-based access control (ABAC), policy, and audit all stay in the loop. Faster movement does not mean looser governance.

AWS cross-Pacific path with uncompressible data

The benchmark runs on real cloud infrastructure over real distance. No lab tricks and no synthetic compression gains: the result shows transport behavior on a link with a 135 ms round-trip time (RTT).

Environment

Ohio (us-east-2) to Tokyo (ap-northeast-1)

AWS VPC peering on 25 Gbps ENA networking, 9001-byte MTU jumbo frames, and 96 vCPU / 185 GB RAM instances on each side.

Test Data

Incompressible files from 100 MB through 500 GB

Files came from /dev/urandom, so the result shows transport behavior, not synthetic compression gains.

Transport

Purpose-built for distributed data

PumaMesh's transport is designed to break past the TCP-era ceiling on long-haul transfer, so data moves at link speed even when it lives continents away from the user.
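Why /dev/urandom matters: random bytes give a compressor nothing to exploit, so any speedup has to come from the transport itself. A minimal check, assuming Python's standard `zlib` as a generic stand-in compressor (not necessarily what any tool in the benchmark uses) and a 1 MiB sample from `os.urandom`, which on Linux draws from the same kernel random source as /dev/urandom. The helper name, sample size, and repetitive control input are illustrative choices:

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size, using zlib's default level."""
    return len(zlib.compress(data)) / len(data)


SIZE = 1 << 20  # 1 MiB sample; the benchmark files were far larger

random_bytes = os.urandom(SIZE)   # random input, like the benchmark files
repetitive_bytes = b"A" * SIZE    # highly compressible control input

print(f"random:     {compression_ratio(random_bytes):.3f}")
print(f"repetitive: {compression_ratio(repetitive_bytes):.4f}")
```

Random input comes out about the same size or slightly larger, while the repetitive control shrinks by orders of magnitude. That is why a compressing tool cannot inflate its numbers on this test set.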

Moved more than the link should allow

On AWS, PumaMesh sustained 25.8 Gbps, about 3% above the nominal 25 Gbps ENA ceiling, moving more data than a strict 100% utilization figure would allow.

PumaMesh moved 1 TB across the Pacific in roughly six minutes, bursting above the 25 Gbps link rating and holding near line rate throughout. SCP-class tools need hours for the same transfer.
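The time claims reduce to size × 8 / rate. A back-of-envelope sketch, assuming a decimal terabyte (10^12 bytes), a constant rate for the whole transfer, and no startup or protocol overhead; the rates are the ones reported on this page, and the helper names are illustrative:

```python
ONE_TB_BYTES = 1e12  # assumption: decimal terabyte


def transfer_seconds(num_bytes: float, rate_bps: float) -> float:
    """Seconds to move num_bytes at a constant rate_bps (bits per second)."""
    return num_bytes * 8 / rate_bps


def average_gbps(num_bytes: float, seconds: float) -> float:
    """Average rate in Gbps needed to move num_bytes in the given time."""
    return num_bytes * 8 / seconds / 1e9


for name, rate_bps in [("PumaMesh at 25.8 Gbps", 25.8e9), ("SCP at 116 Mbps", 116e6)]:
    secs = transfer_seconds(ONE_TB_BYTES, rate_bps)
    print(f"{name}: {secs / 60:.1f} min ({secs / 3600:.2f} h)")

# "1 TB in roughly six minutes" implies this average rate
print(f"1 TB in 6 min needs {average_gbps(ONE_TB_BYTES, 6 * 60):.1f} Gbps on average")
```

At these rates, 1 TB takes about 5.2 minutes at 25.8 Gbps and roughly 19 hours at SCP's 116 Mbps. Six minutes end to end corresponds to an average of about 22 Gbps.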

What legacy TCP tools do on the same path

Same 25 Gbps link, same 135 ms RTT, same uncompressible data. TCP-era tools stay far below line rate, and the gap comes from the protocol, not a tuning flag.

SCP

Single-stream TCP plateaued at 116 Mbps

SCP used less than 0.5% of the 25 Gbps link. That is why long-haul transfer is still painful when teams rely on default SSH tools.

rsync

rsync plateaued at 113 Mbps

Compression overhead on uncompressible data made rsync slightly slower than SCP. As with SCP, the limit is the protocol, not a missing tuning flag.

boto3 multipart

Parallel TCP plateaued near 2.2 Gbps

Multipart upload is roughly 19 times faster than single-stream SCP, but it still uses less than 10% of the link and falls well short of PumaMesh on the same path.
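A standard way to see the single-stream ceiling: a TCP connection cannot have more than one window of unacknowledged data outstanding per round trip, so its rate is at most window / RTT, and filling a link takes roughly its bandwidth-delay product (BDP) in flight. A sketch of that arithmetic for the 135 ms path, using the rates above. It shows how much data each observed rate implies was in flight; it does not identify which buffer or window setting was the actual limiter, and the helper name is illustrative:

```python
RTT_S = 0.135      # 135 ms round-trip time on the Ohio-Tokyo path
LINK_BPS = 25e9    # nominal 25 Gbps ENA link


def bytes_in_flight(rate_bps: float, rtt_s: float) -> float:
    """Bytes that must be unacknowledged at once to sustain rate_bps over rtt_s."""
    return rate_bps * rtt_s / 8


bdp = bytes_in_flight(LINK_BPS, RTT_S)
print(f"BDP to fill the link: {bdp / 1e6:.1f} MB")

observed = {"SCP": 116e6, "rsync": 113e6, "boto3 multipart (all streams)": 2.2e9}
for name, rate_bps in observed.items():
    in_flight = bytes_in_flight(rate_bps, RTT_S)
    print(f"{name:30s} ~{in_flight / 1e6:6.2f} MB in flight ({in_flight / bdp:.1%} of BDP)")
```

For this path the BDP is about 422 MB, while SCP's 116 Mbps corresponds to only about 2 MB in flight. Long RTT multiplies the amount of outstanding data a sender must sustain, which is why distance hurts TCP-era tools so much more than it hurts on a LAN.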

Why these benchmarks matter, and why security in the path does not cost throughput

The transport runs over a post-quantum mesh underlay, with ABAC evaluated on every transfer and a tamper-evident audit trail chained across every hop. The benchmark numbers were measured with all of these controls active, not with governance switched off.

Post-quantum on every hop

100% post-quantum in flight, with wolfSSL 5.9.1 in the cryptographic stack. Relays never decrypt payloads.

Content-aware routing

ABAC rules evaluated on file attributes decide where each payload can land, before any bytes leave the source.

Line rate over distance

25.8 Gbps sustained over a 135 ms RTT, above the nominal 25 Gbps ENA ceiling.

Evidence as byproduct

Every run produces CMMC, FedRAMP, and EU AI Act evidence, with no separate audit project.
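The content-aware routing card describes ABAC decisions made on file attributes before any transfer starts. A minimal sketch of that idea, in which the attribute names (`classification`, `export_controlled`), the classification levels, the destinations, and both rules are hypothetical; this is not PumaMesh's policy model or API:

```python
from dataclasses import dataclass

# Hypothetical classification ladder, for illustration only.
LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}


@dataclass(frozen=True)
class FileAttrs:
    classification: str       # one of LEVELS
    export_controlled: bool


@dataclass(frozen=True)
class Destination:
    name: str
    max_classification: str   # highest level this destination may hold
    region: str


def may_land(f: FileAttrs, dest: Destination, allowed_export_regions: set[str]) -> bool:
    """Evaluate attribute rules on metadata only, before any bytes move."""
    if LEVELS[f.classification] > LEVELS[dest.max_classification]:
        return False
    if f.export_controlled and dest.region not in allowed_export_regions:
        return False
    return True


def eligible_destinations(
    f: FileAttrs, destinations: list[Destination], allowed_export_regions: set[str]
) -> list[str]:
    """Names of destinations that pass every rule, in the order given."""
    return [d.name for d in destinations if may_land(f, d, allowed_export_regions)]


DESTS = [
    Destination("ohio-gov", "restricted", "us"),
    Destination("tokyo-lab", "confidential", "jp"),
    Destination("public-cdn", "public", "us"),
]
payload = FileAttrs(classification="confidential", export_controlled=True)
print(eligible_destinations(payload, DESTS, allowed_export_regions={"us"}))  # ['ohio-gov']
```

Here a confidential, export-controlled payload may only land at `ohio-gov`: `tokyo-lab` fails the export rule and `public-cdn` fails the classification rule. Because the decision uses only attributes, a denied destination never receives any bytes.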

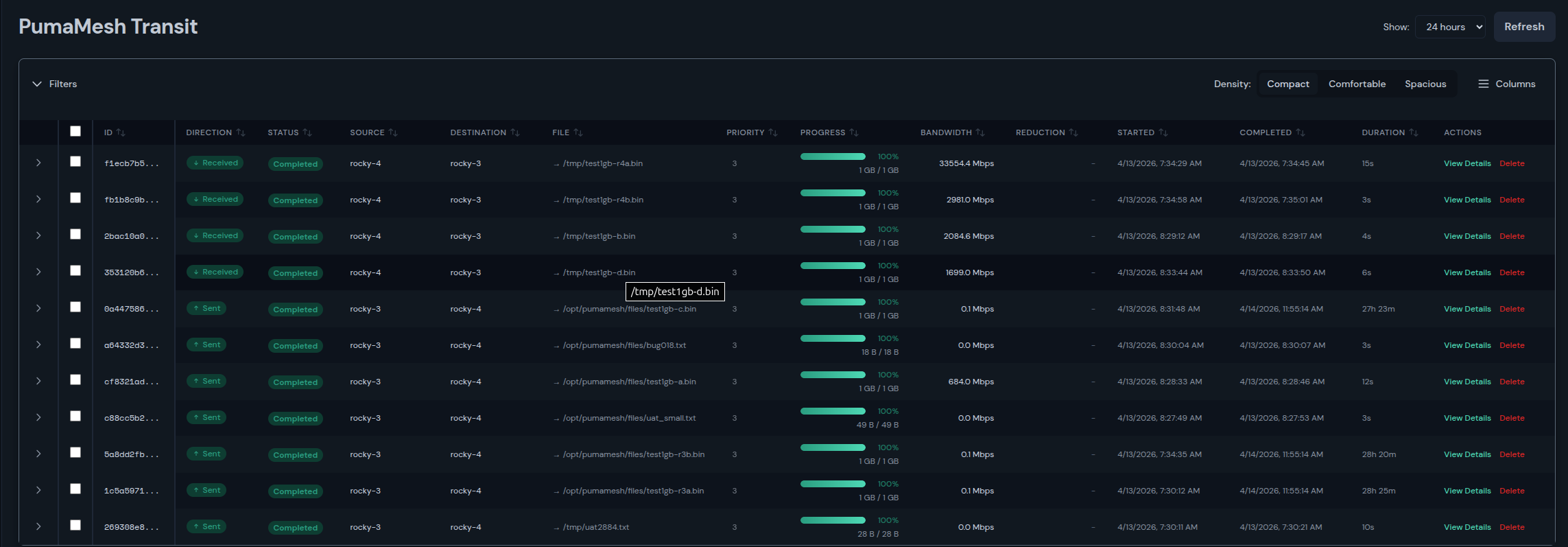

The result lives in the console teams actually use

Transit shows bandwidth, file size, duration, direction, and completion state in the product. The Fabric view shows the topology behind every transfer. The proof is operational, not a lab artifact.

Transit

Per-transfer telemetry in-product

Bandwidth, file size, duration, direction, and completion state show up next to each transfer, with no separate dashboard to stitch together.

Fabric

Topology behind every transfer

The Fabric view shows distributed nodes, long-haul paths, and the network context behind each throughput result.

Audit

Every run is evidence

Transfers are logged with operator, policy, and classification context, so performance results double as compliance evidence.
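Audit records that carry operator, policy, and classification context can be made tamper-evident by chaining them: each entry's hash covers the previous entry's hash, so changing one record invalidates every link after it. A minimal sketch of that generic technique using SHA-256, assuming nothing about PumaMesh's actual log format; the helper names, the extra `bytes` field, and the sample values are hypothetical:

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """SHA-256 over the previous link plus a canonical JSON encoding of the record."""
    payload = prev_hash + json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list[dict], record: dict) -> None:
    """Add a record, linking it to the hash of the current last entry."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def verify(log: list[dict]) -> bool:
    """Recompute every link; an edited, reordered, or removed middle entry breaks it."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


log: list[dict] = []
append(log, {"operator": "alice", "policy": "export-us-only", "classification": "confidential", "bytes": 10**12})
append(log, {"operator": "bob", "policy": "default", "classification": "internal", "bytes": 5 * 10**9})
print(verify(log))  # True

log[0]["record"]["operator"] = "mallory"  # tamper with an earlier entry
print(verify(log))  # False
```

A plain hash chain detects edits, reordering, and deletions in the middle of the log. Detecting truncation of the newest entries also requires recording or signing the latest hash somewhere outside the log.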